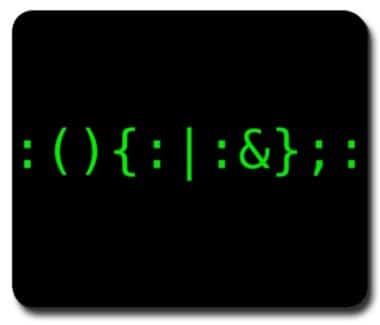

This puzzle reveals that you don’t need to write a few lines of shell scripts, you would just need one line (using pipes) to achieve complex tasks. See below:

Write a bash script to calculate the frequency of each word in a text file words.txt.

For simplicity sake, you may assume:

words.txt contains only lowercase characters and space ‘ ‘ characters.

Each word must consist of lowercase characters only.

Words are separated by one or more whitespace characters.

For example, assume that words.txt has the following content:the day is sunny the the

the sunny is is

Your script should output the following, sorted by descending frequency:

the 4

is 3

sunny 2

day 1

Note:

Don’t worry about handling ties, it is guaranteed that each word’s frequency count is unique.

Submit your solution at Leetcode: https://leetcode.com/problems/word-frequency/

We are interested in one-line solutions only, a shell script (multiple line) reading each line, parsing each words and sort them would be straightforward although.

Solution – cat, tr, awk, sort

cat words.txt | tr -s ' ' '\n' | awk '{nums[$1]++}END{for(word in nums) print word, nums[word]}' | sort -rn -k2

Solution – grep, sort, uniq, sort, awk

grep -oE '[a-z]+' words.txt | sort | uniq -c | sort -r | awk '{print $2" "$1}'

Solution, sed, grep, sort, uniq, sort, awk

sed -r 's/\s+/\n/g' words.txt | grep -v "^$" | sort | uniq -c | sort -r | awk '{print $2" "$1}'

Solution – awk and sort

awk '{words[$1]+=1} END{for(word in words){print word,words[word]}}' RS="[ \n]+" words.txt | sort -nrk2

Solution cat and awk

cat words.txt | awk '{for(i=1;i<=NF;++i) { arr[$i]++; } } END { x=0; for(var in arr) {newarr[arr[var]]=var; if(arr[var]>x) x=arr[var];} for(i=x;i>0;--i) if (newarr[i] > 0) print newarr[i] " "i; }'

Solution – tr, sort, uniq, sort, awk

tr -s ' ' '\n' < words.txt|sort|uniq -c|sort -nr|awk '{print $2, $1}'

Solution - sed

cat words.txt | tr -s '[[:space:]]' '\n'| sort | uniq -c | sort -r | sed -r -e 's/[[:space:]]*([[:digit:]]+)[[:space:]]*([[:alpha:]]+)/\2 \1/g'

There is a famous quote: Where there is a shell, there is a way. Share on X

Common Intermediate Results

Among the above solutions, many share the same intermediate solutions.

The most important step is to parse the words and print them line by line, so we can use:

sed -r 's/\s+/\n/g' words.txt

or

cat words.txt | tr -s ' ' '\n'

or

grep -oE '[a-z]+' words.txt

and they will all print out:

the

day

is

sunny

the

the

the

sunny

is

is

if there are addition empty lines at the end (print-out), you can use grep -v "^$" (-v means invert match) to select only non-empty lines. Then we can sort the output to put all relevant words together (if specified -r, it will revert the sorting results).

sed -r 's/\s+/\n/g' words.txt | grep -v "^$" | sort

produces:

day

is

is

is

sunny

sunny

the

the

the

the

then applying the uniq -c which counts the output.

1 day

3 is

2 sunny

4 the

We are getting close, so you can sort this again (better specifying the -r).

4 the

3 is

2 sunny

1 day

Then, just pipe to awk which is made for splitting the columns and print out.

awk '{print $2" "$1}'

produces the answer

the 4

is 3

sunny 2

day 1

--EOF (The Ultimate Computing & Technology Blog) --

795 wordsLast Post: The Weird Thing about Javascript - Part I

Next Post: Case Study: use PHPQuery to Crawl 3000 images from Tumblr

Most of the above solutions fail for real-life texts which may include uppercase letters and letters outside the English alphabet. A more encompassing solution to tokenization is the following:

grep -Eow ‘\w+’ words.txt

With case folding:

grep -Eow ‘\w+’ words.txt | awk ‘{ print tolower($0) }’

And finally word frequencies:

grep -Eow ‘\w+’ words.txt | awk ‘{ print tolower($0) }’ | sort | uniq -c | sort -nr

If desired, the columns with frequencies and words can switch places with:

| awk ‘{ print $2 ” ” $1 }’

Thank you. True, the Unicode letters are tricky.